Containers are now common in enterprise deployments. Software containers (in Docker and other formats) pose particular security challenges because of the scale and flexibility of the container operating environment. Container deployments frequently involve a fast DevOps process which, combined with the use of numerous open-source components, calls for strict process governance beginning at the development stage.

This is where scanning and securing images becomes the crucial element of a secure CI/CD pipeline.


Containerization: How to Get Started?

Most of the people I speak with are eager to take advantage of the many benefits that containers provide, but they are unfamiliar with how to use them. They may have one or two container-based systems on-premises or in the cloud, but no defined strategy.

It’s easy to get excited about containers because of how disruptive they are, packaging long-standing Unix concepts into something that is easy to move around. However, I recommend that people explicitly state their short- and long-term goals, and allow enough time to adjust to containers and the ecosystem that surrounds them.

Why Choose Containers?

The following are the primary reasons why DevOps teams want to start using containers:

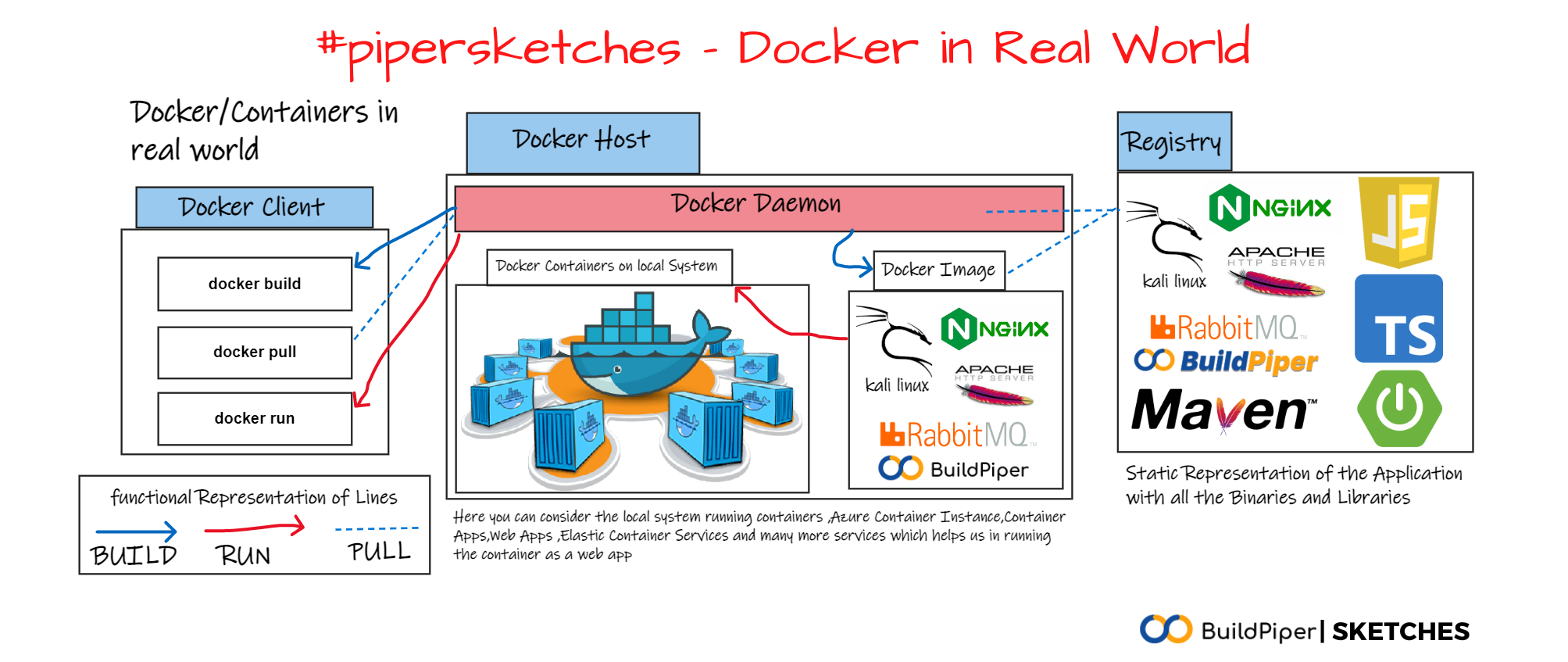

- Accelerate application development and deployment: Containers are lean, light, and fast, and you can get started quickly by utilizing boilerplate images based on popular open-source projects. Once your work is complete, you can package it as a container image, ship it, and run it in production the same way you would in development. Things may get even faster if you add automation to the process, such as CI/CD technologies.

- Move programs to the Cloud: Containers’ portability is another really valuable feature. Containerization isolates an application from the host operating system and infrastructure, so the same container can run on-premises or in the cloud (private or public) without converting deployment formats or changing a line of code.

- Make the switch to Microservices: Containers are often single-purpose, single-process, which fits well with microservices designs. Microservices applications are easier to design and upgrade than large monolithic apps. Containers are the ideal platform for microservices, and the ecosystem that has grown up around them makes it possible for even the largest companies to scale a microservices approach.


Advantages of Containers and How to Start Using Them

There are several advantages to containerizing your apps, but where do you begin? Is it better to convert your legacy monolithic application into modern containerized microservices, or should you only containerize the “new stuff”?

So, how about starting by containerizing an old legacy program as it is, rather than rewriting it into microservices? When you containerize a traditional, monolithic program that wasn’t developed for containers, you lose some of the advantages of microservices apps, particularly the ease of maintenance and updates, but there are still a lot of advantages to consider.

Containerizing your app allows you to bring containers into the environment and familiarize your team with them while organizing teams and building processes that will help them migrate to a microservices-based approach more smoothly.

Finally, you’ll want to have a single pipeline and toolchain that uses containers to package new microservices and manage older applications. You can normalize all processes around containers this way, even if you’re running legacy monolithic programs within them. The advantage of this technique is that you can start bolting microservices onto your existing application, making any new feature microservice-based.

The lift and shift use case, as it’s known in DevOps jargon, is one hybrid technique that’s been getting a lot of attention recently. The term “lift and shift” refers to the practice of containerizing an on-premises monolithic application to move it from one data center to another (usually into a modern public or private cloud).

Lift and shift, as one speaker/guest pointed out in AWS Online Tech Talks, can and should be more than simply a mode of transportation. It offers the foundation for moving to a microservices approach and introduces containers to the environment in a controlled manner. As a result, it’s quickly become a popular method for introducing DevOps teams to containers. Even if it’s just utilized to give a restricted set of benefits, it might be a quick win for those looking to demonstrate concrete progress on a container strategy.

A complete rewrite into microservices is a major step if your objective is to re-architect a legacy app using containers. There are intermediate steps, such as restructuring the software into several larger chunks, what we call “microservices” or else “Happy monoliths”, a term I learned from a reactor talk by Rajat Khare. This also brings certain advantages and lets you progress towards full microservices over time.

When deciding what to containerize first and why, DevOps teams should think about forming partnerships with external stakeholders who can help support subsequent innovation. Given that my blog’s name is “DevSecOps”, it should come as no surprise that I believe security teams should be at the top of the list, since they have the potential to be a very strategic and valuable partner to DevOps.

Regardless of the limitations that exist between security and DevOps (some of which I discussed in my earlier essay), DevOps’ collaborative attitude may be a powerful draw for security professionals. Not only can the security team be a valuable ally, but they may also provide insights into security/IT risk factors that might support a DevOps-driven business case for containerizing a certain app.

That’s why I geek out so much about the “Toyota Production System”, and why I have written other posts about my love for it.

At the end of the day, no one-size-fits-all solution exists for the “rebuild or containerize the existing app?” conundrum. That’s why early victories are so important—whatever you decide to do, make sure you plan and set reasonable goals.

If you are part of a DevOps team, I’m sure you’re already a fan of containers, as I am, given how they’ve alleviated the agony of environment-related setup difficulties and decreased your infrastructure requirements by being so much lighter than full-blown VMs. However, the very feature that makes them so light, sharing the host’s kernel, also makes them vulnerable to security threats.

It’s crucial to remember that much of the container security scaremongering stems from the technology’s relative youth — Docker is only roughly 3 years old, after all! There is still ongoing work being done to patch possible flaws in container engines, as well as third-party products that improve container management and security.

You can, however, take steps to improve the security of your containerized environments:

Own It!

If security is a hot potato that gets passed down the chain, rapid DevOps deployments will fail. “Who owns container security in your organization?” we asked attendees in a recent webinar. The most common answers were security teams, DevOps teams, shared responsibility, and no one/unknown.

In most circumstances, a shared model is the best option, but roles must be clearly defined.

Scan frequently

A container image contains all of its dependencies, but what if they are insecure? There are various image scanning solutions on the market, some of which are free or open-source. The recommended practice is to apply a vulnerability management system that does the following:

- Scans the image as part of the build process, before it is published to the registry.

- Continues to scan the image while it is in the registry, ensuring that your stored images are not affected by newly discovered vulnerabilities.

- Tracks vulnerabilities in running containers. When new vulnerabilities are found, make sure you have the tools in place to see whether they are putting your environment at risk.

- If you have an active image pipeline, it’s a good idea to invest in a specialized container scanning solution, such as Aqua, Snyk, and many more.

Whatever method you use, scan your Docker Images periodically!
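To make the “scan before publishing” step concrete, here is a minimal sketch of a pipeline gate in Python. It assumes your scanner’s report has already been parsed into a list of findings; the `Finding` structure, the severity names, and the `HIGH` threshold are my own assumptions for illustration, not the output format of Aqua, Snyk, or any other tool.

```python
from dataclasses import dataclass

# Severity ranking used for comparison. The names follow common scanner
# output but are an assumption, not the schema of any specific tool.
SEVERITY_RANK = {"LOW": 1, "MEDIUM": 2, "HIGH": 3, "CRITICAL": 4}


@dataclass(frozen=True)
class Finding:
    """One vulnerability reported for an image (hypothetical structure)."""
    cve_id: str
    package: str
    severity: str


def blocking_findings(findings, threshold="HIGH"):
    """Return the findings at or above the threshold severity.

    Unknown severity strings are treated as CRITICAL so the gate fails closed.
    """
    limit = SEVERITY_RANK[threshold]
    worst = SEVERITY_RANK["CRITICAL"]
    return [
        f for f in findings
        if SEVERITY_RANK.get(f.severity.upper(), worst) >= limit
    ]


def gate_image(findings, threshold="HIGH"):
    """Return True when the image may be published, False when it is blocked."""
    return not blocking_findings(findings, threshold)


if __name__ == "__main__":
    # Placeholder identifiers, not real CVEs.
    report = [
        Finding("CVE-0000-0001", "openssl", "CRITICAL"),
        Finding("CVE-0000-0002", "zlib", "LOW"),
    ]
    for finding in blocking_findings(report):
        print(f"blocking: {finding.cve_id} in {finding.package}")
    print("publish allowed:", gate_image(report))
```

The same function can be reused for the registry and runtime scans: feed it the latest findings for an image that is already stored or running, and raise an alert instead of failing a build.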


Always Annotate the Artifacts

Artifacts matter a great deal in professional environments because they are what actually runs there, so they carry much of what security means to us in practice. Annotating them tells you which version of the software is in use, which bugs were encountered in deployments, how far performance was optimized, and the other factors that describe how the container was built and deployed using automation and DevOps techniques.

Annotating container artifacts also gives you a record of build quality that you can evaluate.
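One way to annotate an image is with labels at build time. The sketch below, in Python, builds a label set from the pre-defined annotation keys of the OCI image specification and turns it into `--label` arguments for `docker build`. The function names and the example values are hypothetical; facts such as known bugs or performance notes have no standard key, so you would have to add them under a namespace of your own.

```python
from datetime import datetime, timezone


def build_labels(version, revision, source_url, created=None):
    """Return image labels keyed by OCI pre-defined annotation names.

    `created` should be a timezone-aware datetime; it defaults to now (UTC).
    """
    created = created or datetime.now(timezone.utc)
    return {
        "org.opencontainers.image.version": version,
        "org.opencontainers.image.revision": revision,
        "org.opencontainers.image.source": source_url,
        "org.opencontainers.image.created": created.isoformat(),
    }


def label_args(labels):
    """Turn labels into `--label key=value` arguments for `docker build`."""
    args = []
    for key in sorted(labels):
        args += ["--label", f"{key}={labels[key]}"]
    return args


if __name__ == "__main__":
    # Example values are made up for illustration.
    labels = build_labels("1.4.2", "abc123", "https://example.com/repo.git")
    print(["docker", "build", *label_args(labels), "."])
```

Because the labels travel with the image, anyone inspecting it later can see what was built and from which revision, without access to the pipeline that produced it.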

Revoke privileges

“With great power comes great responsibility” is an adage that applies to both superpowers and root privileges. The Docker daemon is the only component of Docker that requires root access; therefore, you’ll want to limit who has access to it. Make sure that Docker admin users have access appropriate to their roles. With role-based access from solutions like Aqua, Snyk, SonarQube, and many more, users with “Auditor” access to the Docker daemon will, for example, only be able to inspect container logs.


Remember what I always say: “hit the market before the world wakes up with all the blessings and privileges.” The security version is: “solve the vulnerability before a hacker exploits it.”

In addition to withdrawing access from users, you should also ensure that containers do not run with “root rights”. There’s always the possibility of container breakout, so make sure a single compromised container cannot end up compromising all of the other running containers, as well as the host system. Docker supports User Namespaces from version 1.10 onwards, allowing a container’s root user to map to a non-root user on the host system. This functionality isn’t turned on by default, so you’ll have to activate it.
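A simple check you can add to a pipeline is to reject Dockerfiles whose final stage never switches away from root. The Python sketch below is my own illustration, not a feature of Docker: it only reads `FROM` and `USER` instructions, ignores line continuations, and cannot see a non-root user that the base image may already set, so it errs on the side of reporting root.

```python
def effective_user(dockerfile_text):
    """Return the last USER set in the final build stage, or None if none is set."""
    user = None
    for raw in dockerfile_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        parts = line.split(None, 1)
        instruction = parts[0].upper()  # Dockerfile instructions are case-insensitive
        if instruction == "FROM":
            user = None  # a new stage starts; earlier USER lines no longer apply
        elif instruction == "USER" and len(parts) == 2:
            user = parts[1].strip()
    return user


def runs_as_root(dockerfile_text):
    """True when the final stage sets no USER, or sets root by name or UID 0."""
    user = effective_user(dockerfile_text)
    if user is None:
        return True  # conservative: assume the base image default is root
    name = user.split(":", 1)[0]  # drop an optional ":group" suffix
    return name in ("root", "0")


if __name__ == "__main__":
    sample = "FROM alpine\nRUN adduser -D app\nUSER app\nCMD [\"sh\"]\n"
    print("runs as root:", runs_as_root(sample))
```

This complements user namespaces rather than replacing them: the check keeps the process inside the container unprivileged, while the namespace mapping limits what a container root user could do on the host.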

Keep secrets hidden

When it comes to container security, we’re generally concerned about containers having access to other containers or the host system. Compromised secrets, such as API tokens, private keys, and usernames/passwords, can nevertheless give hostile parties access to external services outside your containerized environment. Container images that reveal your secrets are always dangerous, but they become much more dangerous when published to a registry such as Docker Hub.

The widely used ‘solution’ of storing secrets in environment variables is similarly insufficient, as environment variables may be readily leaked or written to log files. It’s best to add secrets at run-time, when you docker run your images. Using a dedicated secrets storage service, such as Vault, is even better.
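As a minimal sketch of run-time secrets, the Python snippet below reads a secret from a file that is mounted into the container when it starts, instead of from an environment variable or the image itself. The `/run/secrets` default is where Docker mounts Swarm and Compose secrets; the function name and the secret name in the example are hypothetical.

```python
from pathlib import Path

# Docker mounts Swarm/Compose secrets under this directory by default.
DEFAULT_SECRETS_DIR = Path("/run/secrets")


def read_secret(name, secrets_dir=DEFAULT_SECRETS_DIR):
    """Read one secret from a file mounted at run time and return its value."""
    if Path(name).name != name:
        # Reject names such as "../other" that would escape the secrets directory.
        raise ValueError(f"invalid secret name: {name!r}")
    path = Path(secrets_dir) / name
    if not path.is_file():
        raise FileNotFoundError(f"secret {name!r} is not mounted in {secrets_dir}")
    # Strip only the trailing newline that most tools append to the file.
    return path.read_text(encoding="utf-8").rstrip("\r\n")


if __name__ == "__main__":
    try:
        password = read_secret("db_password")  # hypothetical secret name
        print("secret loaded, length:", len(password))
    except FileNotFoundError as err:
        print(err)
```

Note that the example prints only the length of the secret. Whatever mechanism you choose, the value itself should never reach logs or image layers.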

Remove any components or Images that are no longer in use.

It’s easy to lose track of all your containers, especially if you’re running a cluster, and wind up running older versions of your images that expose vulnerabilities or have vulnerable components that you’ve previously repaired.
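To avoid losing track, the clean-up rule itself can be automated. The Python sketch below picks the image tags that are candidates for removal, assuming you can list each tag with its creation time and know which tags are still referenced by running workloads. The function name, the input shapes, and the defaults (keep the three newest, prune after 90 days) are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone


def stale_tags(images, in_use, keep_latest=3, max_age_days=90, now=None):
    """Pick image tags that are candidates for removal, newest first.

    images: dict mapping tag -> creation time (timezone-aware datetime)
    in_use: set of tags referenced by running workloads; never selected here,
            because those workloads should be upgraded before the image goes
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    newest_first = sorted(images, key=images.get, reverse=True)
    protected = set(newest_first[:keep_latest]) | set(in_use)
    return [
        tag for tag in newest_first
        if tag not in protected and images[tag] < cutoff
    ]


if __name__ == "__main__":
    today = datetime.now(timezone.utc)
    inventory = {
        "1.0": today - timedelta(days=400),
        "1.1": today - timedelta(days=200),
        "1.2": today - timedelta(days=100),
        "1.3": today - timedelta(days=10),
    }
    print(stale_tags(inventory, in_use={"1.1"}, keep_latest=1))
```

Old tags that are still in use are deliberately left alone; they are a signal to redeploy on a newer image, not something to delete from under a running container.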

Risk mitigation here has two sides: using a container management solution, such as Kubernetes, and old-fashioned housekeeping to ensure you’re constantly removing out-of-date versions of your images and their dependencies.

Use automation instead of SSH

Installing an SSH daemon inside containers should be avoided at all costs! Instead of SSHing into a container to do routine jobs, consider whether you could automate them; in the simplest scenario, bash scripts and a user-level cron job will do.

Although it may seem like a loss of control, shutting off SSH is one of the most effective protections against bots and human attackers.

Conclusion

These are some proactive security measures you can take to protect your containerized environments. However, Docker has only been around for a short period, and its built-in management and security capabilities are still in their infancy. That’s why investing in a dedicated solution like Aqua, which ‘fills the gaps’ and aims to make your containers as safe as dedicated VMs, is a fantastic option.
